Feature Extraction Workflow

This example shows a complete workflow for feature extraction. The example uses the humanactivity data set, which has 60 predictors and 24,075 observations.

Load and Examine Data

Load the humanactivity data, which is available when you run this example.

load humanactivity

View a description of the data.

Description

Description = 29×1 string

" === Human Activity Data === "

" "

" The humanactivity data set contains 24,075 observations of five different "

" physical human activities: Sitting, Standing, Walking, Running, and "

" Dancing. Each observation has 60 features extracted from acceleration "

" data measured by smartphone accelerometer sensors. The data set contains "

" the following variables: "

" "

" * actid - Response vector containing the activity IDs in integers: 1, 2, "

" 3, 4, and 5 representing Sitting, Standing, Walking, Running, and "

" Dancing, respectively "

" * actnames - Activity names corresponding to the integer activity IDs "

" * feat - Feature matrix of 60 features for 24,075 observations "

" * featlabels - Labels of the 60 features "

" "

" The Sensor HAR (human activity recognition) App [1] was used to create "

" the humanactivity data set. When measuring the raw acceleration data with "

" this app, a person placed a smartphone in a pocket so that the smartphone "

" was upside down and the screen faced toward the person. The software then "

" calibrated the measured raw data accordingly and extracted the 60 "

" features from the calibrated data. For details about the calibration and "

" feature extraction, see [2] and [3], respectively. "

" "

" [1] El Helou, A. Sensor HAR recognition App. MathWorks File Exchange "

" http:/matlabcentral/fileexchange/54138-sensor-har-recognition-app "

" [2] STMicroelectronics, AN4508 Application note. “Parameters and "

" calibration of a low-g 3-axis accelerometer.” 2014. "

" [3] El Helou, A. Sensor Data Analytics. MathWorks File Exchange "

" /matlabcentral/fileexchange/54139-sensor-data-analytics--french-webinar-code- "

The data set is organized by activity type. To better represent a random set of data, shuffle the rows.

n = numel(actid); % Number of data points
rng(1) % For reproducibility
idx = randsample(n,n); % Shuffle
X = feat(idx,:); % The corresponding labels are in actid(idx)
Labels = actid(idx);
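As a quick sanity check on this kind of shuffle, the following sketch uses a small synthetic matrix to confirm that randsample(n,n) returns every row index exactly once, so features and labels stay aligned. The names nDemo, featDemo, and actidDemo are hypothetical stand-ins for n, feat, and actid, and are not part of the example.

```matlab
% Hypothetical stand-ins for feat and actid: label k marks row k.
nDemo = 8;
featDemo = (1:nDemo)' * [1 10];        % Row k is [k 10k]
actidDemo = (1:nDemo)';

rng(1)                                 % For reproducibility
idxDemo = randsample(nDemo,nDemo);     % Sampling without replacement gives a permutation

XDemo = featDemo(idxDemo,:);           % Shuffled features
LabelsDemo = actidDemo(idxDemo);       % Labels shuffled with the same index

% Every index appears exactly once, and each row still matches its label.
isPermutation = isequal(sort(idxDemo(:)),(1:nDemo)');
isAligned = isequal(XDemo(:,1),LabelsDemo);
disp([isPermutation isAligned])
```

Indexing the features and the labels with the same idx vector is what keeps each observation paired with its activity ID.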

View the activities and corresponding labels.

tbl = table(["1";"2";"3";"4";"5"],...
    ["Sitting";"Standing";"Walking";"Running";"Dancing"],...
    'VariableNames',{'Label' 'Activity'});
disp(tbl)

Label Activity

_____ __________

"1" "Sitting"

"2" "Standing"

"3" "Walking"

"4" "Running"

"5" "Dancing"

Set up the data for cross-validation. Use cvpartition to create training and validation sets from the data.

c = cvpartition(n,"HoldOut",0.1);

idxtrain = training(c);

Xtrain = X(idxtrain,:);

LabelTrain = Labels(idxtrain);

idxtest = test(c);

Xtest = X(idxtest,:);

LabelTest = Labels(idxtest);

Choose New Feature Dimensions

There are several considerations in choosing the number of features to extract:

More features use more memory and computational time.

Fewer features can produce a poor classifier.

To begin, choose 5 features. Later you will see the effects of using more features.

q = 5;

Extract Features

There are two feature extraction functions, sparsefilt and rica. Begin with the sparsefilt function. Set the number of iterations to 10 so that the extraction does not take too long.

Typically, you get good results by running the sparsefilt algorithm for a few iterations to a few hundred iterations. Running the algorithm for too many iterations can lead to decreased classification accuracy, a type of overfitting problem.
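One way to see how the iteration limit affects a downstream classifier is to repeat the extraction for a few limits and compare holdout losses. The following is a minimal sketch on synthetic data, not on humanactivity. The data, the grid of iteration limits, and all variable names are assumptions chosen for illustration, and the trend on real data can differ.

```matlab
rng(1)                                     % For reproducibility
% Hypothetical synthetic data: 3 classes, 6 predictors, 100 points per class.
Xs = [randn(100,6); randn(100,6)+2; randn(100,6)-2];
Ys = repelem((1:3)',100);
cs = cvpartition(Ys,"HoldOut",0.2);
Xtr = Xs(training(cs),:);  Ytr = Ys(training(cs));
Xte = Xs(test(cs),:);      Yte = Ys(test(cs));

iterLimits = [5 20 80];                    % Assumed values, for illustration only
testLoss = zeros(size(iterLimits));
ws = warning('off','all');                 % Silence expected nonconvergence warnings
for k = 1:numel(iterLimits)
    M = sparsefilt(Xtr,4,'IterationLimit',iterLimits(k));
    C = fitcecoc(transform(M,Xtr),Ytr,'Learners',templateLinear('Solver','lbfgs'));
    testLoss(k) = loss(C,transform(M,Xte),Yte);
end
warning(ws)                                % Restore the previous warning state
disp(table(iterLimits',testLoss','VariableNames',{'IterationLimit','TestLoss'}))
```

Comparing the TestLoss column across rows gives a rough indication of whether more iterations help or hurt for a given data set.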

Use sparsefilt to obtain the sparse filtering model while using 10 iterations.

tic

Mdl = sparsefilt(Xtrain,q,'IterationLimit',10);

Warning: Solver LBFGS was not able to converge to a solution.

toc

Elapsed time is 0.116473 seconds.

sparsefilt warns that the internal LBFGS optimizer did not converge, at least in part because you set the iteration limit to 10. Nevertheless, you can use the result to train a classifier.

Create Classifier

Transform the original data into the new feature representation.

NewX = transform(Mdl,Xtrain);
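The shapes involved in this step can be checked on a small synthetic problem. The sketch below assumes random data and hypothetical variable names (Xd, qd, Md, Zd). It shows that transform keeps one row per observation and returns one column per extracted feature, and that the learned weights form a p-by-q matrix.

```matlab
rng(1)                                   % For reproducibility
Xd = randn(200,6);                       % Hypothetical data: 200 observations, 6 predictors
qd = 3;                                  % Number of features to extract
ws = warning('off','all');               % A nonconvergence warning is expected here
Md = sparsefilt(Xd,qd,'IterationLimit',10);
warning(ws)                              % Restore the previous warning state

Zd = transform(Md,Xd);                   % New representation of the same observations
disp(size(Md.TransformWeights))          % p-by-q weight matrix
disp(size(Zd))                           % n-by-q feature matrix
```

The same model object must be used to transform both training and test data so that both sets share one feature representation.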

Train a linear classifier based on the transformed data and the correct classification labels in LabelTrain. The accuracy of the learned model is sensitive to the fitcecoc regularization parameter Lambda. Try to find the best value for Lambda by using the OptimizeHyperparameters name-value pair. Be aware that this optimization takes time. If you have a Parallel Computing Toolbox™ license, use parallel computing for faster execution. If you don't have a parallel license, remove the UseParallel calls before running this script.

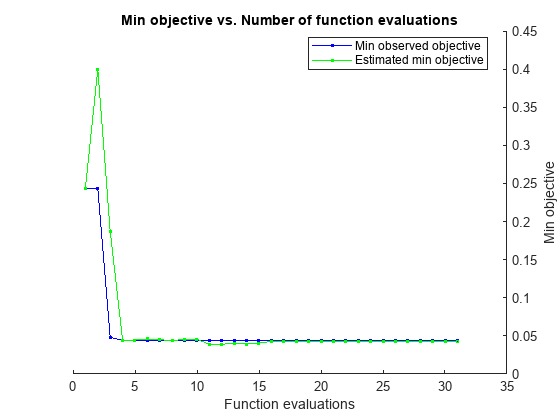

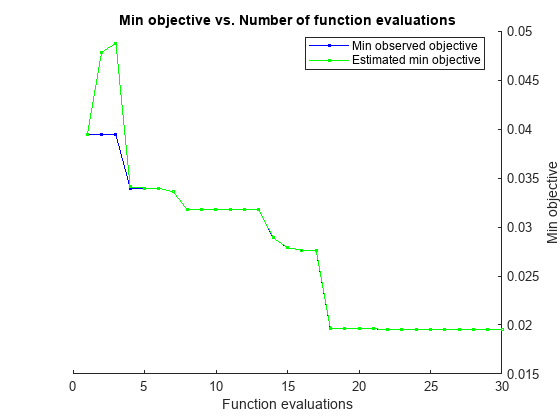

t = templateLinear('Solver','lbfgs');
options = struct('UseParallel',true);
tic
Cmdl = fitcecoc(NewX,LabelTrain,Learners=t, ...
    OptimizeHyperparameters="auto",...
    HyperparameterOptimizationOptions=options);

Copying objective function to workers...
Done copying objective function to workers.
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 1 | 6 | Best | 0.24372 | 4.6976 | 0.24372 | 0.24372 | onevsall | 3.9324 | svm |
| 2 | 6 | Accept | 0.56743 | 6.3649 | 0.24372 | 0.40007 | onevsone | 0.12409 | logistic |
| 3 | 6 | Best | 0.047905 | 5.6082 | 0.047905 | 0.18708 | onevsall | 3.0428e-08 | svm |
| 4 | 5 | Best | 0.044259 | 5.9896 | 0.044259 | 0.044417 | onevsone | 2.3997e-09 | svm |
| 5 | 5 | Accept | 0.27949 | 5.8778 | 0.044259 | 0.044417 | onevsall | 0.0026915 | logistic |
| 6 | 6 | Accept | 0.27072 | 1.4726 | 0.044259 | 0.046079 | onevsall | 4.6009 | svm |
| 7 | 6 | Accept | 0.044951 | 2.6811 | 0.044259 | 0.045078 | onevsone | 8.4641e-07 | svm |
| 8 | 6 | Accept | 0.047074 | 2.4291 | 0.044259 | 0.043721 | onevsall | 4.6737e-10 | svm |
| 9 | 5 | Accept | 0.048782 | 2.2619 | 0.044259 | 0.045714 | onevsall | 4.188e-07 | svm |
| 10 | 5 | Accept | 0.74128 | 1.3176 | 0.044259 | 0.045714 | onevsone | 4.6111 | svm |
| 11 | 4 | Accept | 0.047951 | 9.4874 | 0.044259 | 0.039012 | onevsall | 2.5237e-09 | logistic |
| 12 | 4 | Accept | 0.044259 | 2.4651 | 0.044259 | 0.039012 | onevsone | 4.639e-10 | svm |
| 13 | 6 | Accept | 0.047305 | 2.1181 | 0.044259 | 0.04016 | onevsall | 4.6238e-10 | svm |
| 14 | 6 | Accept | 0.13762 | 1.563 | 0.044259 | 0.039295 | onevsall | 0.000197 | svm |
| 15 | 6 | Best | 0.044213 | 2.2286 | 0.044213 | 0.040603 | onevsone | 3.2212e-07 | svm |
| 16 | 6 | Best | 0.044028 | 2.1986 | 0.044028 | 0.042724 | onevsone | 3.3084e-08 | svm |
| 17 | 6 | Accept | 0.047397 | 2.2898 | 0.044028 | 0.042701 | onevsall | 7.0046e-09 | svm |
| 18 | 6 | Best | 0.043982 | 2.2335 | 0.043982 | 0.04234 | onevsone | 4.8906e-08 | svm |
| 19 | 6 | Accept | 0.044074 | 4.8905 | 0.043982 | 0.0426 | onevsone | 4.618e-10 | logistic |
| 20 | 5 | Accept | 0.049889 | 4.6319 | 0.043982 | 0.042666 | onevsall | 1.5731e-07 | logistic |
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 21 | 5 | Accept | 0.044074 | 2.3749 | 0.043982 | 0.042666 | onevsone | 9.2673e-08 | svm |
| 22 | 6 | Accept | 0.047397 | 5.9207 | 0.043982 | 0.042595 | onevsall | 4.6968e-10 | logistic |
| 23 | 6 | Accept | 0.044074 | 2.0958 | 0.043982 | 0.042706 | onevsone | 4.7064e-10 | svm |
| 24 | 6 | Accept | 0.74128 | 1.3176 | 0.043982 | 0.042866 | onevsall | 4.6104 | logistic |
| 25 | 5 | Accept | 0.044074 | 2.3518 | 0.043982 | 0.043111 | onevsone | 1.6131e-07 | svm |
| 26 | 5 | Accept | 0.04412 | 2.2526 | 0.043982 | 0.043111 | onevsone | 4.951e-09 | svm |
| 27 | 6 | Accept | 0.044397 | 4.4049 | 0.043982 | 0.04312 | onevsone | 1.9221e-08 | logistic |
| 28 | 6 | Accept | 0.33889 | 1.6083 | 0.043982 | 0.043246 | onevsone | 0.0057941 | svm |
| 29 | 6 | Accept | 0.048689 | 5.0386 | 0.043982 | 0.043249 | onevsall | 1.8172e-08 | logistic |
| 30 | 5 | Accept | 0.047397 | 6.426 | 0.043982 | 0.043243 | onevsall | 4.6642e-10 | logistic |
| 31 | 5 | Accept | 0.1882 | 1.4843 | 0.043982 | 0.043243 | onevsall | 0.031302 | svm |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 31

Total elapsed time: 23.9334 seconds

Total objective function evaluation time: 108.0825

Best observed feasible point:

Coding Lambda Learner

________ __________ _______

onevsone 4.8906e-08 svm

Observed objective function value = 0.043982

Estimated objective function value = 0.043668

Function evaluation time = 2.2335

Best estimated feasible point (according to models):

Coding Lambda Learner

________ __________ _______

onevsone 1.6131e-07 svm

Estimated objective function value = 0.043243

Estimated function evaluation time = 2.4171

toc

Elapsed time is 25.690360 seconds.
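The optimization trace above shows the objective jumping from about 0.04 to 0.74 as Lambda grows. A small manual sweep can make this sensitivity easier to see. The sketch below is an illustration on synthetic data rather than a replacement for OptimizeHyperparameters. The data, the Lambda grid, and the variable names are assumptions, and the learner and coding design are left at their defaults.

```matlab
rng(1)                                        % For reproducibility
% Hypothetical synthetic data: 3 well-separated classes, 4 predictors.
Xl = [randn(150,4); randn(150,4)+3; randn(150,4)-3];
Yl = repelem((1:3)',150);

lambdas = [1e-8 1e-4 1e-1 5];                 % Assumed grid, for illustration only
cvLoss = zeros(size(lambdas));
for k = 1:numel(lambdas)
    tl = templateLinear('Solver','lbfgs','Lambda',lambdas(k));
    CV = fitcecoc(Xl,Yl,'Learners',tl,'KFold',5);
    cvLoss(k) = kfoldLoss(CV);                % Cross-validated classification error
end
disp(table(lambdas',cvLoss','VariableNames',{'Lambda','CVLoss'}))
```

A fixed grid like this is cheap to run, but it explores only one hyperparameter, whereas the Bayesian optimization in the example also varies the coding design and the learner type.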

Evaluate Classifier

Check the error of the classifier when applied to test data.

TestX = transform(Mdl,Xtest);
Loss = loss(Cmdl,TestX,LabelTest)

Loss = 0.0489

Did this transformation result in a better classifier than one trained on the original data? Create a classifier based on the original training data and evaluate its loss.

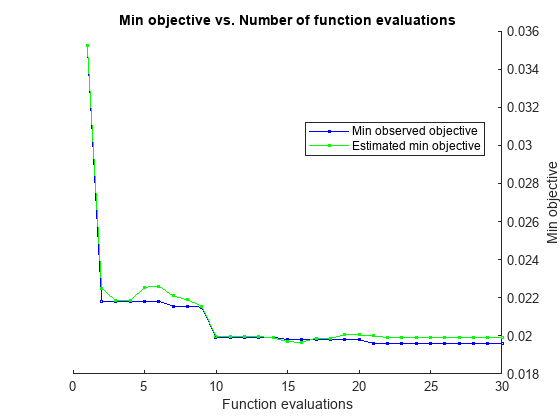

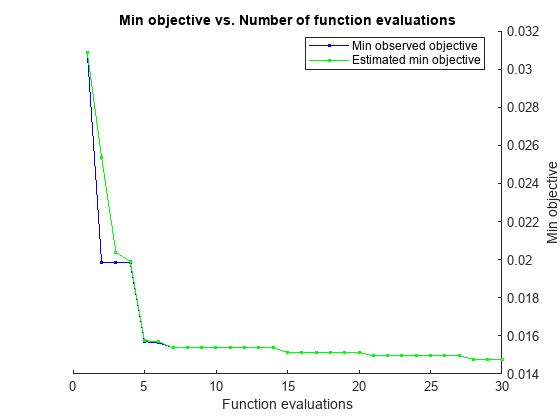

tic
Omdl = fitcecoc(Xtrain,LabelTrain,Learners=t, ...
    OptimizeHyperparameters="auto",...
    HyperparameterOptimizationOptions=options);

Copying objective function to workers...

Warning: Files that have already been attached are being ignored. To see which files are attached see the 'AttachedFiles' property of the parallel pool.

Done copying objective function to workers.
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 1 | 6 | Best | 0.035259 | 5.5107 | 0.035259 | 0.035259 | onevsone | 0.77518 | svm |
| 2 | 6 | Best | 0.021829 | 8.7406 | 0.021829 | 0.022507 | onevsone | 1.4423e-09 | svm |
| 3 | 6 | Accept | 0.03729 | 13.605 | 0.021829 | 0.021838 | onevsall | 0.6103 | svm |
| 4 | 6 | Accept | 0.022383 | 8.5819 | 0.021829 | 0.021836 | onevsone | 4.1356e-09 | svm |
| 5 | 6 | Accept | 0.024045 | 7.7692 | 0.021829 | 0.02255 | onevsone | 4.6679e-10 | svm |
| 6 | 6 | Accept | 0.040198 | 8.308 | 0.021829 | 0.022615 | onevsall | 4.6023 | logistic |
| 7 | 6 | Best | 0.021553 | 7.829 | 0.021553 | 0.0221 | onevsone | 7.3067e-08 | svm |
| 8 | 6 | Accept | 0.021829 | 7.6416 | 0.021553 | 0.021892 | onevsone | 5.8054e-09 | svm |
| 9 | 6 | Best | 0.021506 | 9.0909 | 0.021506 | 0.021558 | onevsone | 8.1397e-08 | svm |
| 10 | 6 | Best | 0.019937 | 33.993 | 0.019937 | 0.019992 | onevsone | 0.0001022 | logistic |
| 11 | 6 | Accept | 0.021091 | 8.4908 | 0.019937 | 0.019984 | onevsone | 3.4715e-08 | svm |
| 12 | 6 | Accept | 0.019937 | 46.814 | 0.019937 | 0.019961 | onevsone | 1.569e-05 | logistic |
| 13 | 6 | Accept | 0.022152 | 6.9556 | 0.019937 | 0.019965 | onevsone | 3.7097e-08 | svm |
| 14 | 6 | Accept | 0.039275 | 7.372 | 0.019937 | 0.019897 | onevsone | 1.0643 | logistic |
| 15 | 6 | Best | 0.019799 | 53.215 | 0.019799 | 0.019695 | onevsone | 1.1332e-07 | logistic |
| 16 | 6 | Accept | 0.022568 | 8.594 | 0.019799 | 0.019662 | onevsone | 2.0178e-05 | svm |
| 17 | 6 | Accept | 0.020906 | 20.329 | 0.019799 | 0.019877 | onevsone | 0.00079115 | logistic |
| 18 | 6 | Accept | 0.023814 | 43.531 | 0.019799 | 0.019875 | onevsall | 4.6349e-10 | svm |
| 19 | 6 | Accept | 0.020353 | 45.355 | 0.019799 | 0.020057 | onevsone | 2.5069e-05 | logistic |
| 20 | 6 | Accept | 0.020214 | 46.906 | 0.019799 | 0.020048 | onevsone | 4.64e-10 | logistic |
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 21 | 6 | Best | 0.019614 | 51.847 | 0.019614 | 0.02 | onevsone | 1.5863e-06 | logistic |
| 22 | 6 | Accept | 0.020168 | 49.186 | 0.019614 | 0.01991 | onevsone | 3.644e-07 | logistic |
| 23 | 6 | Accept | 0.022845 | 130.99 | 0.019614 | 0.019909 | onevsall | 3.2522e-09 | logistic |
| 24 | 6 | Accept | 0.024691 | 45.677 | 0.019614 | 0.01991 | onevsall | 1.0458e-06 | svm |
| 25 | 6 | Accept | 0.020629 | 46.99 | 0.019614 | 0.019901 | onevsone | 7.1125e-09 | logistic |
| 26 | 6 | Accept | 0.02026 | 47.407 | 0.019614 | 0.019899 | onevsone | 3.5513e-08 | logistic |
| 27 | 6 | Accept | 0.020168 | 48.879 | 0.019614 | 0.019912 | onevsone | 4.9086e-09 | logistic |
| 28 | 6 | Accept | 0.02026 | 46.764 | 0.019614 | 0.019909 | onevsone | 1.3482e-08 | logistic |
| 29 | 6 | Accept | 0.02003 | 46.455 | 0.019614 | 0.019916 | onevsone | 3.6929e-09 | logistic |
| 30 | 6 | Accept | 0.024229 | 8.5617 | 0.019614 | 0.019905 | onevsone | 0.0091116 | svm |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 190.1928 seconds

Total objective function evaluation time: 921.392

Best observed feasible point:

Coding Lambda Learner

________ __________ ________

onevsone 1.5863e-06 logistic

Observed objective function value = 0.019614

Estimated objective function value = 0.019832

Function evaluation time = 51.8468

Best estimated feasible point (according to models):

Coding Lambda Learner

________ _________ ________

onevsone 3.644e-07 logistic

Estimated objective function value = 0.019905

Estimated function evaluation time = 51.7579

toc

Elapsed time is 195.893143 seconds.

Losso = loss(Omdl,Xtest,LabelTest)

Losso = 0.0177

The classifier based on sparse filtering has a higher test loss (0.0489) than the classifier based on the original data (0.0177). However, it uses only 5 features rather than the 60 features in the original data, and is much faster to create (about 26 seconds of hyperparameter optimization versus about 196 seconds). Try to make a better sparse filtering classifier by increasing q from 5 to 20, which is still less than the 60 features in the original data.

q = 20;

Mdl2 = sparsefilt(Xtrain,q,'IterationLimit',10);

Warning: Solver LBFGS was not able to converge to a solution.

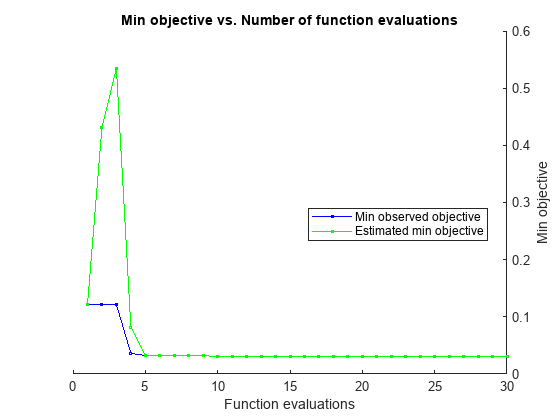

NewX = transform(Mdl2,Xtrain);
TestX = transform(Mdl2,Xtest);
tic
Cmdl = fitcecoc(NewX,LabelTrain,Learners=t, ...
    OptimizeHyperparameters="auto",...
    HyperparameterOptimizationOptions=options);

Copying objective function to workers...

Warning: Files that have already been attached are being ignored. To see which files are attached see the 'AttachedFiles' property of the parallel pool.

Done copying objective function to workers.
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 1 | 6 | Best | 0.12147 | 1.2383 | 0.12147 | 0.12147 | onevsall | 0.39835 | svm |
| 2 | 6 | Accept | 0.74128 | 1.4354 | 0.12147 | 0.43121 | onevsall | 2.244 | logistic |
| 3 | 6 | Accept | 0.74128 | 1.5455 | 0.12147 | 0.53465 | onevsone | 4.6046 | svm |
| 4 | 6 | Best | 0.036136 | 3.915 | 0.036136 | 0.082195 | onevsall | 2.1658e-06 | svm |
| 5 | 6 | Best | 0.032352 | 5.1021 | 0.032352 | 0.032396 | onevsone | 1.3434e-07 | svm |
| 6 | 6 | Accept | 0.040151 | 3.7664 | 0.032352 | 0.032389 | onevsone | 2.0976e-05 | logistic |
| 7 | 6 | Accept | 0.045459 | 2.0148 | 0.032352 | 0.032413 | onevsall | 0.00083838 | svm |
| 8 | 6 | Accept | 0.032352 | 8.7656 | 0.032352 | 0.032422 | onevsall | 4.4817e-08 | svm |
| 9 | 6 | Accept | 0.74128 | 1.6885 | 0.032352 | 0.0324 | onevsone | 4.6093 | logistic |
| 10 | 6 | Best | 0.030183 | 5.2918 | 0.030183 | 0.03016 | onevsone | 6.8351e-10 | svm |
| 11 | 6 | Accept | 0.032583 | 11.351 | 0.030183 | 0.03017 | onevsone | 7.561e-08 | logistic |
| 12 | 6 | Accept | 0.038213 | 2.6803 | 0.030183 | 0.030243 | onevsone | 3.8304e-05 | svm |
| 13 | 6 | Accept | 0.032075 | 8.8183 | 0.030183 | 0.030252 | onevsall | 4.7331e-10 | svm |
| 14 | 6 | Accept | 0.039321 | 1.7803 | 0.030183 | 0.030264 | onevsall | 4.8211e-05 | svm |
| 15 | 6 | Accept | 0.035259 | 2.9937 | 0.030183 | 0.030191 | onevsone | 3.6175e-06 | svm |
| 16 | 5 | Accept | 0.040521 | 5.4636 | 0.030183 | 0.030185 | onevsall | 1.224e-05 | logistic |
| 17 | 5 | Accept | 0.036275 | 5.0559 | 0.030183 | 0.030185 | onevsone | 1.7186e-06 | logistic |
| 18 | 6 | Accept | 0.13827 | 0.90957 | 0.030183 | 0.030183 | onevsall | 4.6063 | svm |
| 19 | 6 | Accept | 0.030598 | 4.5358 | 0.030183 | 0.030251 | onevsone | 6.1338e-09 | svm |
| 20 | 6 | Accept | 0.058289 | 2.5512 | 0.030183 | 0.030239 | onevsall | 0.00088491 | logistic |
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 21 | 6 | Accept | 0.10919 | 2.3477 | 0.030183 | 0.030234 | onevsone | 0.0027425 | logistic |
| 22 | 6 | Accept | 0.031475 | 8.4684 | 0.030183 | 0.030235 | onevsall | 3.4821e-09 | svm |
| 23 | 6 | Accept | 0.045274 | 3.9578 | 0.030183 | 0.030226 | onevsall | 0.00010652 | logistic |
| 24 | 6 | Accept | 0.045782 | 1.9671 | 0.030183 | 0.030202 | onevsone | 0.00067026 | svm |
| 25 | 6 | Accept | 0.031244 | 20.475 | 0.030183 | 0.030201 | onevsone | 4.6681e-10 | logistic |
| 26 | 6 | Best | 0.029952 | 4.7633 | 0.029952 | 0.029927 | onevsone | 1.8406e-09 | svm |
| 27 | 6 | Best | 0.029814 | 4.8472 | 0.029814 | 0.029915 | onevsone | 4.6362e-10 | svm |
| 28 | 6 | Accept | 0.036229 | 9.8727 | 0.029814 | 0.029915 | onevsall | 7.1397e-07 | logistic |
| 29 | 6 | Accept | 0.033413 | 3.7575 | 0.029814 | 0.029921 | onevsone | 6.2968e-07 | svm |
| 30 | 6 | Accept | 0.033967 | 22.019 | 0.029814 | 0.029923 | onevsall | 7.3548e-08 | logistic |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 39.9471 seconds

Total objective function evaluation time: 163.3793

Best observed feasible point:

Coding Lambda Learner

________ __________ _______

onevsone 4.6362e-10 svm

Observed objective function value = 0.029814

Estimated objective function value = 0.030009

Function evaluation time = 4.8472

Best estimated feasible point (according to models):

Coding Lambda Learner

________ __________ _______

onevsone 6.8351e-10 svm

Estimated objective function value = 0.029923

Estimated function evaluation time = 4.94

toc

Elapsed time is 41.581666 seconds.

Loss2 = loss(Cmdl,TestX,LabelTest)

Loss2 = 0.0320

This time the classification loss (0.0320) is lower than for the 5-feature classifier (0.0489), but is still higher than the loss for the original data classifier (0.0177). Again, the software takes less time to create the classifier for 20 predictors (about 42 seconds) than the classifier for the full data (about 196 seconds).

Try RICA

Try the other feature extraction function, rica. Extract 20 features, create a classifier, and examine its loss on the test data. Use more iterations for the rica function, because rica can perform better with more iterations than sparsefilt uses.

Often prior to feature extraction, you "prewhiten" the input data as a data preprocessing step. The prewhitening step includes two transforms, decorrelation and standardization, which make the predictors have zero mean and identity covariance. rica supports only the standardization transform. You use the Standardize name-value pair argument to make the predictors have zero mean and unit variance. Alternatively, you can transform images for contrast normalization individually by applying the zscore transformation before calling sparsefilt or rica.
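The difference between standardization alone and full prewhitening can be seen on a small synthetic data set. The following sketch is an illustration under stated assumptions: the data and variable names are hypothetical, and the decorrelation step uses PCA whitening through an eigendecomposition of the covariance matrix, which is one common choice and is not something rica performs for you.

```matlab
rng(1)                                  % For reproducibility
% Hypothetical correlated data: 500 observations, 3 predictors.
A = [2 0 0; 1 1 0; 0.5 0.5 3];
Xraw = randn(500,3)*A + [10 -5 2];

% Standardization only: zero mean and unit variance, but correlations remain.
Xstd = zscore(Xraw);

% Full prewhitening (PCA whitening): center, decorrelate, and rescale.
Xc = Xraw - mean(Xraw);
[V,D] = eig(cov(Xc));
Xwhite = Xc*V/sqrt(D);                  % Same as Xc*V*diag(1./sqrt(diag(D)))

disp(cov(Xstd))                         % Unit diagonal, nonzero off-diagonal entries
disp(cov(Xwhite))                       % Approximately the identity matrix
```

Setting Standardize to true corresponds to the zscore step only, so any decorrelation has to be applied to the data before calling the feature extraction function.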

Mdl3 = rica(Xtrain,q,'IterationLimit',400,'Standardize',true);

Warning: Solver LBFGS was not able to converge to a solution.

NewX = transform(Mdl3,Xtrain);
TestX = transform(Mdl3,Xtest);
tic
Cmdl = fitcecoc(NewX,LabelTrain,Learners=t, ...
    OptimizeHyperparameters="auto",...
    HyperparameterOptimizationOptions=options);

Copying objective function to workers...

Warning: Files that have already been attached are being ignored. To see which files are attached see the 'AttachedFiles' property of the parallel pool.

Done copying objective function to workers.
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 1 | 6 | Best | 0.048689 | 1.7274 | 0.048689 | 0.048689 | onevsone | 0.49772 | svm |
| 2 | 6 | Accept | 0.062442 | 2.2582 | 0.048689 | 0.055562 | onevsone | 0.45646 | logistic |
| 3 | 6 | Best | 0.032075 | 2.5078 | 0.032075 | 0.047435 | onevsone | 0.014644 | svm |
| 4 | 4 | Accept | 0.035998 | 3.1521 | 0.025522 | 0.040067 | onevsall | 3.8151e-05 | svm |
| 5 | 4 | Best | 0.025522 | 3.3093 | 0.025522 | 0.040067 | onevsone | 2.2518e-09 | svm |
| 6 | 4 | Accept | 0.035675 | 3.1199 | 0.025522 | 0.040067 | onevsall | 1.2518e-09 | svm |
| 7 | 6 | Accept | 0.077164 | 1.6918 | 0.025522 | 0.025637 | onevsone | 3.067 | svm |
| 8 | 6 | Accept | 0.026445 | 3.2975 | 0.025522 | 0.031634 | onevsone | 0.0012412 | svm |
| 9 | 6 | Accept | 0.025568 | 3.4501 | 0.025522 | 0.027235 | onevsone | 4.6552e-10 | svm |
| 10 | 6 | Accept | 0.025614 | 3.42 | 0.025522 | 0.025585 | onevsone | 4.677e-10 | svm |
| 11 | 6 | Accept | 0.025706 | 3.2351 | 0.025522 | 0.02559 | onevsone | 4.5709e-09 | svm |
| 12 | 6 | Accept | 0.027783 | 2.7219 | 0.025522 | 0.02559 | onevsone | 0.0031183 | svm |
| 13 | 6 | Accept | 0.025614 | 3.1778 | 0.025522 | 0.02559 | onevsone | 0.0001457 | svm |
| 14 | 6 | Accept | 0.025568 | 3.0303 | 0.025522 | 0.02559 | onevsone | 1.4458e-07 | svm |
| 15 | 6 | Accept | 0.027968 | 3.7776 | 0.025522 | 0.02559 | onevsone | 0.00021934 | logistic |
| 16 | 6 | Accept | 0.035398 | 2.7932 | 0.025522 | 0.02559 | onevsall | 2.0683e-07 | svm |
| 17 | 6 | Accept | 0.052151 | 1.5232 | 0.025522 | 0.02559 | onevsall | 0.13918 | svm |
| 18 | 6 | Accept | 0.026214 | 11.715 | 0.025522 | 0.02559 | onevsone | 7.7428e-08 | logistic |
| 19 | 6 | Accept | 0.16042 | 1.847 | 0.025522 | 0.025488 | onevsall | 4.6082 | logistic |
| 20 | 6 | Accept | 0.033783 | 12.694 | 0.025522 | 0.025591 | onevsall | 4.6182e-10 | logistic |
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 21 | 6 | Best | 0.025475 | 4.0126 | 0.025475 | 0.02559 | onevsone | 3.7723e-06 | svm |
| 22 | 6 | Accept | 0.038351 | 4.3279 | 0.025475 | 0.02559 | onevsall | 0.0010239 | logistic |
| 23 | 6 | Accept | 0.12239 | 1.0595 | 0.025475 | 0.025591 | onevsall | 4.5914 | svm |
| 24 | 6 | Accept | 0.026214 | 6.1461 | 0.025475 | 0.025591 | onevsone | 1.0019e-05 | logistic |
| 25 | 6 | Accept | 0.037613 | 2.0165 | 0.025475 | 0.025591 | onevsall | 0.0031581 | svm |
| 26 | 6 | Accept | 0.034936 | 2.4126 | 0.025475 | 0.02559 | onevsone | 0.0056673 | logistic |
| 27 | 6 | Accept | 0.034336 | 6.0477 | 0.025475 | 0.025591 | onevsall | 2.7728e-05 | logistic |
| 28 | 6 | Accept | 0.033967 | 10.413 | 0.025475 | 0.025591 | onevsall | 6.8407e-07 | logistic |
| 29 | 6 | Accept | 0.02626 | 8.3859 | 0.025475 | 0.02559 | onevsone | 8.783e-07 | logistic |
| 30 | 6 | Accept | 0.035582 | 2.8227 | 0.025475 | 0.02559 | onevsall | 1.3589e-08 | svm |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 27.1224 seconds

Total objective function evaluation time: 122.0946

Best observed feasible point:

Coding Lambda Learner

________ __________ _______

onevsone 3.7723e-06 svm

Observed objective function value = 0.025475

Estimated objective function value = 0.025475

Function evaluation time = 4.0126

Best estimated feasible point (according to models):

Coding Lambda Learner

________ _________ _______

onevsone 4.677e-10 svm

Estimated objective function value = 0.02559

Estimated function evaluation time = 3.4172

toc

Elapsed time is 28.228099 seconds.

Loss3 = loss(Cmdl,TestX,LabelTest)

Loss3 = 0.0275

The rica-based classifier has a test loss (0.0275) similar to that of the 20-feature sparse filtering classifier (0.0320). The classifier is relatively fast to create (about 28 seconds).

Try More Features

The feature extraction functions have few tuning parameters. One parameter that can affect results is the number of requested features. See how well classifiers work when based on 100 features, rather than the 20 features previously tried, or the 60 features in the original data. Using more features than appear in the original data is called "overcomplete" learning. Conversely, using fewer features is called "undercomplete" learning. Overcomplete learning can lead to increased classification accuracy, while undercomplete learning can save memory and time.
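To make the undercomplete and overcomplete cases concrete, the sketch below extracts fewer and then more features than there are predictors in a small synthetic data set. The data, the values of q, and the variable names are assumptions chosen for illustration.

```matlab
rng(1)                                     % For reproducibility
Xo = randn(300,6);                         % Hypothetical data with p = 6 predictors
ws = warning('off','all');                 % Nonconvergence warnings are expected
Munder = rica(Xo,3,'IterationLimit',20);   % Undercomplete: q = 3 < p
Mover  = rica(Xo,10,'IterationLimit',20);  % Overcomplete: q = 10 > p
warning(ws)                                % Restore the previous warning state

Zunder = transform(Munder,Xo);
Zover  = transform(Mover,Xo);
disp(size(Zunder))                         % 300-by-3
disp(size(Zover))                          % 300-by-10
```

The number of rows is unchanged in both cases. Only the width of the representation changes, which is what drives the memory and time trade-off described above.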

q = 100;

Mdl4 = sparsefilt(Xtrain,q,'IterationLimit',10);

Warning: Solver LBFGS was not able to converge to a solution.

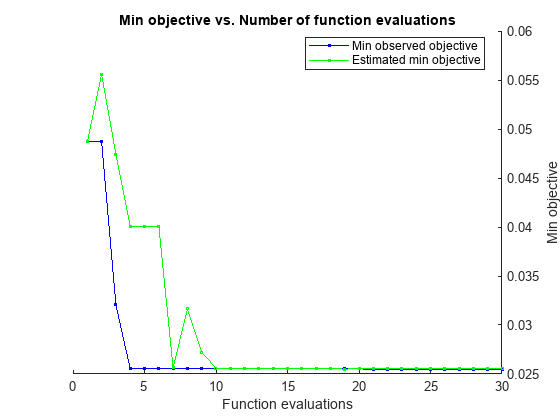

NewX = transform(Mdl4,Xtrain);
TestX = transform(Mdl4,Xtest);
tic
Cmdl = fitcecoc(NewX,LabelTrain,Learners=t, ...
    OptimizeHyperparameters="auto",...
    HyperparameterOptimizationOptions=options);

Copying objective function to workers...

Warning: Files that have already been attached are being ignored. To see which files are attached see the 'AttachedFiles' property of the parallel pool.

Done copying objective function to workers.
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 1 | 6 | Best | 0.039413 | 7.4097 | 0.039413 | 0.039413 | onevsone | 9.6788e-05 | logistic |
| 2 | 6 | Accept | 0.056258 | 8.2736 | 0.039413 | 0.047834 | onevsone | 0.0023908 | svm |
| 3 | 6 | Accept | 0.050535 | 9.9138 | 0.039413 | 0.048735 | onevsall | 0.0001608 | logistic |
| 4 | 6 | Best | 0.033967 | 13.569 | 0.033967 | 0.034082 | onevsone | 9.9249e-05 | svm |
| 5 | 6 | Accept | 0.039413 | 7.9397 | 0.033967 | 0.033968 | onevsone | 9.6762e-05 | logistic |
| 6 | 6 | Accept | 0.034059 | 13.408 | 0.033967 | 0.033968 | onevsone | 0.00010471 | svm |
| 7 | 6 | Best | 0.033598 | 12.795 | 0.033598 | 0.033599 | onevsone | 5.9298e-05 | svm |
| 8 | 6 | Best | 0.031752 | 32.351 | 0.031752 | 0.031753 | onevsall | 5.3759e-06 | svm |
| 9 | 6 | Accept | 0.088841 | 4.3567 | 0.031752 | 0.031753 | onevsone | 0.0029806 | logistic |
| 10 | 6 | Accept | 0.032121 | 9.7001 | 0.031752 | 0.031753 | onevsone | 1.4055e-05 | logistic |
| 11 | 6 | Accept | 0.74128 | 4.2755 | 0.031752 | 0.03176 | onevsone | 1.1119 | svm |
| 12 | 6 | Accept | 0.74128 | 3.1437 | 0.031752 | 0.031767 | onevsone | 1.9263 | logistic |
| 13 | 6 | Accept | 0.37322 | 2.9349 | 0.031752 | 0.03177 | onevsall | 0.080099 | logistic |
| 14 | 6 | Best | 0.028891 | 20.557 | 0.028891 | 0.028907 | onevsone | 5.6435e-06 | svm |
| 15 | 6 | Best | 0.027875 | 16.755 | 0.027875 | 0.027886 | onevsone | 8.1017e-07 | logistic |
| 16 | 6 | Best | 0.027644 | 47.389 | 0.027644 | 0.02765 | onevsall | 2.6085e-07 | logistic |
| 17 | 6 | Accept | 0.18322 | 4.166 | 0.027644 | 0.027651 | onevsall | 4.6137 | svm |
| 18 | 6 | Best | 0.019614 | 45.707 | 0.019614 | 0.019626 | onevsone | 2.2409e-09 | logistic |
| 19 | 6 | Accept | 0.18363 | 4.9399 | 0.019614 | 0.019626 | onevsall | 0.085841 | svm |
| 20 | 6 | Accept | 0.055058 | 9.7251 | 0.019614 | 0.019626 | onevsall | 0.0011989 | svm |
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 21 | 6 | Accept | 0.035306 | 14.675 | 0.019614 | 0.019625 | onevsall | 1.403e-05 | logistic |
| 22 | 6 | Best | 0.019522 | 28.211 | 0.019522 | 0.019531 | onevsone | 7.1633e-10 | svm |
| 23 | 6 | Accept | 0.038582 | 17.001 | 0.019522 | 0.01953 | onevsall | 8.8768e-05 | svm |
| 24 | 6 | Accept | 0.022245 | 32.142 | 0.019522 | 0.019529 | onevsone | 3.8747e-08 | logistic |
| 25 | 6 | Accept | 0.023399 | 31.652 | 0.019522 | 0.01953 | onevsone | 1.4128e-07 | svm |
| 26 | 6 | Accept | 0.020168 | 31.645 | 0.019522 | 0.019535 | onevsone | 7.4304e-09 | svm |
| 27 | 6 | Accept | 0.022937 | 112.17 | 0.019522 | 0.019535 | onevsall | 1.8485e-09 | svm |
| 28 | 6 | Accept | 0.019614 | 27.955 | 0.019522 | 0.01955 | onevsone | 2.0284e-09 | svm |
| 29 | 6 | Accept | 0.020122 | 44.919 | 0.019522 | 0.019549 | onevsone | 4.6246e-10 | logistic |
| 30 | 6 | Accept | 0.020722 | 38.788 | 0.019522 | 0.019547 | onevsone | 8.9251e-09 | logistic |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 149.9449 seconds

Total objective function evaluation time: 658.4665

Best observed feasible point:

Coding Lambda Learner

________ __________ _______

onevsone 7.1633e-10 svm

Observed objective function value = 0.019522

Estimated objective function value = 0.019563

Function evaluation time = 28.2111

Best estimated feasible point (according to models):

Coding Lambda Learner

________ __________ _______

onevsone 2.0284e-09 svm

Estimated objective function value = 0.019547

Estimated function evaluation time = 29.3667

toc

Elapsed time is 153.432841 seconds.

Loss4 = loss(Cmdl,TestX,LabelTest)

Loss4 = 0.0239

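The classifier based on overcomplete sparse filtering with 100 extracted features has a low test loss (0.0239), lower than the 20-feature sparse filtering classifier (0.0320) but still higher than the original data classifier (0.0177).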
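To compare the runs so far side by side, the sketch below collects the test losses and elapsed times reported above into a single table. The numbers are copied from the displayed output and rounded. The variable names are hypothetical, and the times cover only the fitcecoc hyperparameter optimization, not the feature extraction itself.

```matlab
% Values copied from the output reported above (times rounded to 0.01 s).
Method   = ["sparsefilt";"none (original data)";"sparsefilt";"rica";"sparsefilt"];
Features = [5;60;20;20;100];
TestLoss = [0.0489;0.0177;0.0320;0.0275;0.0239];
TrainSeconds = [25.69;195.89;41.58;28.23;153.43];

summaryTbl = table(Method,Features,TestLoss,TrainSeconds);
disp(sortrows(summaryTbl,'TestLoss'))      % Lowest test loss first
```

Sorting by TestLoss puts the original 60-feature classifier first, followed by the 100-feature sparse filtering classifier, which makes the accuracy versus training time trade-off easy to read.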

Mdl5 = rica(Xtrain,q,'IterationLimit',400,'Standardize',true);

Warning: Solver LBFGS was not able to converge to a solution.

NewX = transform(Mdl5,Xtrain);
TestX = transform(Mdl5,Xtest);
tic
Cmdl = fitcecoc(NewX,LabelTrain,Learners=t, ...
    OptimizeHyperparameters="auto",...
    HyperparameterOptimizationOptions=options);

Copying objective function to workers...

Warning: Files that have already been attached are being ignored. To see which files are attached see the 'AttachedFiles' property of the parallel pool.

Done copying objective function to workers.
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 1 | 6 | Best | 0.030875 | 5.8653 | 0.030875 | 0.030875 | onevsone | 0.34902 | svm |
| 2 | 6 | Best | 0.019845 | 6.5249 | 0.019845 | 0.025358 | onevsone | 0.0026162 | logistic |
| 3 | 6 | Accept | 0.03489 | 4.6883 | 0.019845 | 0.020385 | onevsone | 0.099101 | logistic |
| 4 | 6 | Accept | 0.020629 | 15 | 0.019845 | 0.019912 | onevsall | 0.00027131 | logistic |
| 5 | 6 | Best | 0.015691 | 16.21 | 0.015691 | 0.015748 | onevsone | 7.8723e-09 | svm |
| 6 | 6 | Best | 0.015645 | 11.998 | 0.015645 | 0.015699 | onevsone | 3.5196e-05 | logistic |
| 7 | 6 | Best | 0.015368 | 18.798 | 0.015368 | 0.015369 | onevsone | 2.6623e-06 | logistic |
| 8 | 6 | Accept | 0.019476 | 6.5533 | 0.015368 | 0.015369 | onevsone | 0.002019 | logistic |
| 9 | 6 | Accept | 0.022106 | 19.792 | 0.015368 | 0.015369 | onevsall | 0.0019358 | svm |
| 10 | 6 | Accept | 0.11496 | 4.0322 | 0.015368 | 0.01537 | onevsone | 3.4314 | logistic |
| 11 | 6 | Accept | 0.021322 | 6.2828 | 0.015368 | 0.015369 | onevsone | 0.0043416 | logistic |
| 12 | 6 | Accept | 0.084502 | 4.1352 | 0.015368 | 0.015369 | onevsone | 4.5974 | svm |
| 13 | 6 | Accept | 0.015599 | 11.929 | 0.015368 | 0.01537 | onevsone | 5.601e-05 | logistic |
| 14 | 6 | Accept | 0.018876 | 14.777 | 0.015368 | 0.01537 | onevsone | 0.0073401 | svm |
| 15 | 6 | Best | 0.015138 | 16.286 | 0.015138 | 0.015138 | onevsone | 4.647e-10 | svm |
| 16 | 6 | Accept | 0.022799 | 10.342 | 0.015138 | 0.015138 | onevsone | 0.052733 | svm |
| 17 | 6 | Accept | 0.015322 | 18.918 | 0.015138 | 0.015139 | onevsone | 1.3043e-07 | svm |
| 18 | 6 | Accept | 0.04712 | 4.7692 | 0.015138 | 0.015139 | onevsall | 0.10508 | logistic |
| 19 | 6 | Accept | 0.10246 | 4.0277 | 0.015138 | 0.015139 | onevsall | 4.5714 | svm |
| 20 | 6 | Accept | 0.15276 | 4.0699 | 0.015138 | 0.01514 | onevsall | 4.5936 | logistic |
|==============================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | Coding | Lambda | Learner |
| | workers | result | | runtime | (observed) | (estim.) | | | |
|==============================================================================================================================|
| 21 | 6 | Best | 0.014953 | 26.102 | 0.014953 | 0.014953 | onevsone | 8.0118e-05 | svm |
| 22 | 6 | Accept | 0.028291 | 7.1206 | 0.014953 | 0.014953 | onevsall | 0.005946 | logistic |
| 23 | 6 | Accept | 0.015876 | 19.702 | 0.014953 | 0.014953 | onevsone | 4.6726e-10 | logistic |
| 24 | 6 | Accept | 0.015091 | 23.087 | 0.014953 | 0.014953 | onevsone | 2.6545e-06 | svm |
| 25 | 6 | Accept | 0.016845 | 44.235 | 0.014953 | 0.014953 | onevsall | 4.6319e-10 | svm |
| 26 | 6 | Accept | 0.017076 | 54.261 | 0.014953 | 0.014953 | onevsall | 4.3174e-07 | logistic |
| 27 | 6 | Accept | 0.016799 | 45.904 | 0.014953 | 0.014953 | onevsall | 4.2209e-06 | svm |
| 28 | 6 | Best | 0.014768 | 27.573 | 0.014768 | 0.014768 | onevsone | 1.7625e-08 | logistic |
| 29 | 6 | Accept | 0.018599 | 33.435 | 0.014768 | 0.014768 | onevsall | 0.00016625 | svm |
| 30 | 6 | Accept | 0.017584 | 27.061 | 0.014768 | 0.014768 | onevsall | 1.646e-05 | logistic |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 30 reached.

Total function evaluations: 30

Total elapsed time: 113.968 seconds

Total objective function evaluation time: 513.4789

Best observed feasible point:

Coding Lambda Learner

________ __________ ________

onevsone 1.7625e-08 logistic

Observed objective function value = 0.014768

Estimated objective function value = 0.014768

Function evaluation time = 27.5729

Best estimated feasible point (according to models):

Coding Lambda Learner

________ __________ ________

onevsone 1.7625e-08 logistic

Estimated objective function value = 0.014768

Estimated function evaluation time = 27.1423

toc

Elapsed time is 116.497520 seconds.

Loss5 = loss(Cmdl,TestX,LabelTest)

Loss5 = 0.0158

The classifier based on RICA with 100 extracted features has a test loss (0.0158) comparable to, and in this run somewhat lower than, that of the classifier based on sparse filtering with 100 features (0.0239). It also takes less than half as long to create as the classifier trained on the original data.

Optimize Hyperparameters by Using bayesopt

Feature extraction functions have these tuning parameters:

Iteration limit

Function, either rica or sparsefilt

Parameter Lambda

Number of learned features q

Coding, either onevsone or onevsall

The fitcecoc regularization parameter also affects the accuracy of the learned classifier. Include that parameter in the list of hyperparameters as well.

To search among the available parameters effectively, try bayesopt. Use the objective function in the supporting file filterica.m, which includes parameters passed from the workspace.
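The supporting file itself is not reproduced here. As a rough sketch of what an objective function of this shape might look like, the following hypothetical stand-in (filtericaSketch, not the shipped filterica.m) extracts features with the requested settings, fits a linear ECOC classifier, and returns the loss on the held-out data. The slicing of W, the choice to standardize only for rica, and the internal variable names are assumptions made for illustration.

```matlab
function objective = filtericaSketch(x,Xtrain,Xtest,LabelTrain,LabelTest,W)
% Hypothetical stand-in for filterica.m; the shipped file may differ.
% x is a one-row table with variables iterlim, lambda, solver, q,
% lambdareg, and coding, as supplied by bayesopt.

% Assumed convention: take the leading p-by-q block of the fixed matrix W,
% where p is the number of predictors.
initW = W(1:size(Xtrain,2),1:x.q);

% Choose the feature extraction function: 'r' for rica, 's' for sparsefilt.
if x.solver == "r"
    Mdl = rica(Xtrain,x.q,'Lambda',x.lambda,'IterationLimit',x.iterlim, ...
        'InitialTransformWeights',initW,'Standardize',true);
else
    Mdl = sparsefilt(Xtrain,x.q,'Lambda',x.lambda,'IterationLimit',x.iterlim, ...
        'InitialTransformWeights',initW);
end

% Map the training and test data into the learned feature space.
NewX = transform(Mdl,Xtrain);
NewTestX = transform(Mdl,Xtest);

% Linear learners that use the separate classifier regularization strength.
t = templateLinear('Lambda',x.lambdareg,'Solver','lbfgs');
if x.coding == "o"
    codingName = 'onevsone';
else
    codingName = 'onevsall';
end
Cmdl = fitcecoc(NewX,LabelTrain,'Learners',t,'Coding',codingName);

% Objective to minimize: classification error on the held-out data.
objective = loss(Cmdl,NewTestX,LabelTest);
end
```

Passing the data and W as extra arguments keeps the function free of global state; an anonymous function such as @(x) filtericaSketch(x,Xtrain,Xtest,LabelTrain,LabelTest,W) then captures the workspace values at the time the handle is created.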

To remove sources of variation, fix an initial transform weight matrix.

W = randn(1e4,1e3);

Create hyperparameters for the objective function.

iterlim = optimizableVariable('iterlim',[5,500],'Type','integer');
lambda = optimizableVariable('lambda',[0,10]);
solver = optimizableVariable('solver',{'r','s'},'Type','categorical');
qvar = optimizableVariable('q',[5,100],'Type','integer');
lambdareg = optimizableVariable('lambdareg',[1e-6,1],'Transform','log');
coding = optimizableVariable('coding',{'o','a'},'Type','categorical');
vars = [iterlim,lambda,solver,qvar,lambdareg,coding];

Run the optimization without the warnings that occur when the internal optimizations do not run to completion. Run for 60 iterations instead of the default 30 to give the optimization a better chance of locating a good value.

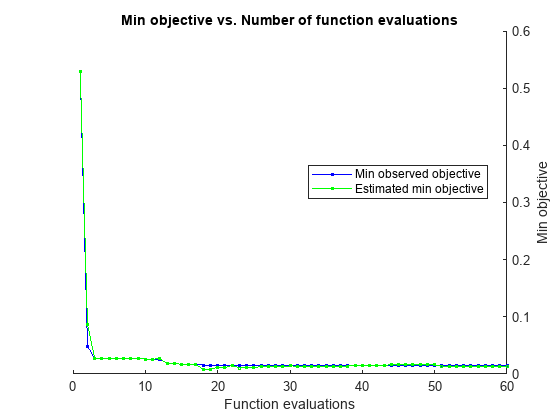

warning('off','stats:classreg:learning:fsutils:Solver:LBFGSUnableToConverge');
tic
results = bayesopt(@(x) filterica(x,Xtrain,Xtest,LabelTrain,LabelTest,W),vars, ...
    'UseParallel',true,'MaxObjectiveEvaluations',60);

Copying objective function to workers...
Done copying objective function to workers.
|===========================================================================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | iterlim | lambda | solver | q | lambdareg | coding |
| | workers | result | | runtime | (observed) | (estim.) | | | | | | |
|===========================================================================================================================================================================|
| 1 | 6 | Best | 0.52943 | 4.912 | 0.52943 | 0.52943 | 70 | 9.3497 | s | 22 | 0.030312 | o |
| 2 | 6 | Best | 0.048927 | 7.0904 | 0.048927 | 0.086902 | 202 | 0.60409 | r | 9 | 0.010864 | o |
| 3 | 6 | Best | 0.027201 | 12.647 | 0.027201 | 0.027592 | 190 | 0.80733 | r | 25 | 0.039332 | o |
| 4 | 6 | Accept | 0.035378 | 8.6101 | 0.027201 | 0.027731 | 54 | 3.0477 | r | 61 | 0.77824 | o |
| 5 | 6 | Accept | 0.25536 | 30.032 | 0.027201 | 0.027466 | 178 | 6.761 | s | 84 | 0.00066641 | o |
| 6 | 6 | Accept | 0.35333 | 32.47 | 0.027201 | 0.027443 | 175 | 2.8287 | s | 92 | 0.0012333 | a |
| 7 | 6 | Accept | 0.12827 | 27.117 | 0.027201 | 0.027393 | 325 | 7.3952 | s | 35 | 8.2172e-06 | a |
| 8 | 6 | Accept | 0.34755 | 33.96 | 0.027201 | 0.027396 | 266 | 4.0928 | s | 60 | 0.00041521 | a |
| 9 | 6 | Accept | 0.078705 | 2.0607 | 0.027201 | 0.027294 | 53 | 1.7616 | r | 7 | 0.73652 | o |
| 10 | 6 | Best | 0.026029 | 6.6583 | 0.026029 | 0.026189 | 70 | 5.0943 | r | 36 | 0.30991 | o |
| 11 | 6 | Accept | 0.040287 | 0.99791 | 0.026029 | 0.026218 | 9 | 4.8121 | s | 13 | 1.0643e-06 | o |
| 12 | 6 | Accept | 0.055786 | 1.2549 | 0.026029 | 0.026247 | 38 | 8.6327 | s | 8 | 1.2675e-05 | o |
| 13 | 6 | Best | 0.018814 | 8.233 | 0.018814 | 0.018829 | 25 | 0.0278 | r | 84 | 0.00010178 | o |
| 14 | 6 | Accept | 0.53812 | 0.49606 | 0.018814 | 0.018875 | 11 | 0.18468 | r | 5 | 1.3987e-06 | o |
| 15 | 6 | Best | 0.016874 | 47.355 | 0.016874 | 0.016839 | 212 | 8.9402 | r | 87 | 0.0039156 | o |
| 16 | 6 | Accept | 0.52264 | 17.397 | 0.016874 | 0.016855 | 394 | 2.7795 | s | 19 | 0.007691 | o |
| 17 | 6 | Accept | 0.020328 | 3.7038 | 0.016874 | 0.016822 | 8 | 3.5707 | r | 99 | 0.10314 | o |
| 18 | 6 | Best | 0.015555 | 7.6923 | 0.015555 | 0.0084954 | 9 | 0.74158 | r | 97 | 0.00043623 | o |
| 19 | 6 | Accept | 0.035237 | 17.017 | 0.015555 | 0.0086345 | 331 | 0.63154 | r | 20 | 1.0203e-06 | a |
| 20 | 6 | Accept | 0.055538 | 0.73762 | 0.015555 | 0.011825 | 11 | 4.4459 | r | 7 | 0.00020189 | o |
|===========================================================================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | iterlim | lambda | solver | q | lambdareg | coding |
| | workers | result | | runtime | (observed) | (estim.) | | | | | | |
|===========================================================================================================================================================================|
| 21 | 6 | Accept | 0.037108 | 1.0492 | 0.015555 | 0.010948 | 20 | 9.5934 | r | 8 | 0.00027665 | o |
| 22 | 6 | Accept | 0.041709 | 1.9456 | 0.015555 | 0.015403 | 28 | 9.3857 | s | 17 | 2.994e-06 | o |
| 23 | 6 | Accept | 0.018454 | 13.93 | 0.015555 | 0.010483 | 22 | 0.69741 | r | 97 | 1.2894e-05 | a |
| 24 | 6 | Accept | 0.028586 | 11.282 | 0.015555 | 0.01139 | 35 | 3.2021 | s | 99 | 1.0644e-06 | o |
| 25 | 6 | Accept | 0.039866 | 2.4192 | 0.015555 | 0.010809 | 28 | 9.8094 | s | 23 | 1.0098e-06 | a |
| 26 | 6 | Accept | 0.88918 | 49.28 | 0.015555 | 0.013345 | 429 | 0.060872 | r | 52 | 0.0011157 | a |
| 27 | 6 | Accept | 0.10786 | 0.53144 | 0.015555 | 0.013969 | 5 | 6.4836 | r | 6 | 1.3566e-06 | a |
| 28 | 6 | Accept | 0.017484 | 56.302 | 0.015555 | 0.013962 | 474 | 1.8928 | r | 48 | 0.00063848 | o |
| 29 | 6 | Accept | 0.38735 | 35.182 | 0.015555 | 0.013918 | 497 | 6.564 | s | 34 | 0.80739 | a |
| 30 | 6 | Accept | 0.044439 | 13.26 | 0.015555 | 0.014003 | 497 | 5.7095 | r | 10 | 1.6015e-05 | a |
| 31 | 6 | Accept | 0.033871 | 1.1249 | 0.015555 | 0.013967 | 18 | 7.7947 | r | 13 | 8.166e-06 | a |
| 32 | 6 | Accept | 0.74128 | 1.6253 | 0.015555 | 0.013465 | 38 | 2.435 | s | 15 | 0.98978 | o |
| 33 | 6 | Accept | 0.32977 | 19.047 | 0.015555 | 0.013292 | 497 | 7.4015 | s | 18 | 1.2428e-06 | o |
| 34 | 6 | Accept | 0.13391 | 0.52899 | 0.015555 | 0.013399 | 8 | 8.6813 | s | 14 | 0.97959 | a |
| 35 | 6 | Accept | 0.19883 | 0.65282 | 0.015555 | 0.013247 | 13 | 2.418 | s | 9 | 0.093133 | a |
| 36 | 6 | Accept | 0.047703 | 0.61038 | 0.015555 | 0.013195 | 7 | 4.4549 | s | 7 | 1.3742e-05 | a |
| 37 | 6 | Accept | 0.2922 | 20.739 | 0.015555 | 0.013686 | 498 | 0.7477 | s | 18 | 1.2496e-06 | a |
| 38 | 6 | Accept | 0.03113 | 11.814 | 0.015555 | 0.013804 | 287 | 9.474 | r | 17 | 7.4789e-06 | a |
| 39 | 6 | Accept | 0.05179 | 82.825 | 0.015555 | 0.014658 | 327 | 8.4596 | r | 100 | 0.99361 | a |
| 40 | 6 | Accept | 0.08023 | 0.89041 | 0.015555 | 0.014749 | 28 | 5.3041 | r | 8 | 0.71177 | a |
|===========================================================================================================================================================================|
| Iter | Active | Eval | Objective | Objective | BestSoFar | BestSoFar | iterlim | lambda | solver | q | lambdareg | coding |
| | workers | result | | runtime | (observed) | (estim.) | | | | | | |
|===========================================================================================================================================================================|
| 41 | 6 | Accept | 0.026725 | 10.287 | 0.015555 | 0.01503 | 13 | 1.3207 | s | 100 | 4.0387e-06 | a |
| 42 | 6 | Accept | 0.054087 | 0.87185 | 0.015555 | 0.014909 | 13 | 2.0473 | s | 9 | 7.9662e-05 | o |
| 43 | 6 | Accept | 0.061574 | 0.80035 | 0.015555 | 0.015371 | 18 | 2.9059 | r | 5 | 3.0653e-05 | a |
| 44 | 6 | Accept | 0.053178 | 1.1839 | 0.015555 | 0.015997 | 20 | 3.5261 | s | 9 | 2.7956e-06 | a |
| 45 | 6 | Accept | 0.03253 | 1.5798 | 0.015555 | 0.01643 | 29 | 4.7229 | r | 10 | 0.0023745 | o |
| 46 | 6 | Accept | 0.019437 | 4.5849 | 0.015555 | 0.015791 | 12 | 1.2408 | r | 91 | 0.012225 | o |
| 47 | 6 | Accept | 0.059445 | 14.142 | 0.015555 | 0.015878 | 439 | 2.1105 | r | 13 | 0.95524 | o |
| 48 | 6 | Accept | 0.047275 | 11.728 | 0.015555 | 0.016694 | 488 | 2.6315 | r | 10 | 1.073e-06 | a |
| 49 | 6 | Accept | 0.040995 | 4.1608 | 0.015555 | 0.015764 | 292 | 4.4442 | r | 5 | 0.00019596 | o |
| 50 | 6 | Accept | 0.084049 | 0.84595 | 0.015555 | 0.016101 | 17 | 4.5231 | r | 12 | 0.94067 | a |
| 51 | 6 | Accept | 0.01608 | 9.7893 | 0.015555 | 0.013937 | 27 | 8.5051 | r | 98 | 0.0014041 | o |
| 52 | 6 | Accept | 0.046612 | 0.75998 | 0.015555 | 0.013516 | 6 | 3.6319 | r | 15 | 0.050078 | o |
| 53 | 6 | Accept | 0.026378 | 3.9473 | 0.015555 | 0.013503 | 5 | 5.451 | s | 86 | 2.2645e-05 | o |
| 54 | 6 | Accept | 0.01652 | 5.525 | 0.015555 | 0.013616 | 6 | 3.4626 | r | 99 | 0.0042698 | o |
| 55 | 6 | Accept | 0.023626 | 4.3491 | 0.015555 | 0.013606 | 6 | 0.87113 | s | 90 | 4.9252e-06 | o |
| 56 | 6 | Accept | 0.035756 | 1.5968 | 0.015555 | 0.013244 | 11 | 2.0662 | r | 26 | 3.9009e-05 | o |
| 57 | 6 | Accept | 0.031252 | 9.0684 | 0.015555 | 0.013039 | 331 | 2.3022 | r | 11 | 3.3415e-05 | o |
| 58 | 6 | Accept | 0.072441 | 12.75 | 0.015555 | 0.013042 | 492 | 2.5934 | r | 11 | 0.72574 | a |
| 59 | 6 | Accept | 0.02269 | 126.16 | 0.015555 | 0.013115 | 491 | 8.0628 | r | 99 | 0.16601 | o |
| 60 | 6 | Accept | 0.039891 | 11.467 | 0.015555 | 0.01292 | 497 | 4.2759 | r | 9 | 0.0019962 | o |

__________________________________________________________

Optimization completed.

MaxObjectiveEvaluations of 60 reached.

Total function evaluations: 60

Total elapsed time: 210.4583 seconds

Total objective function evaluation time: 831.0775

Best observed feasible point:

iterlim lambda solver q lambdareg coding

_______ _______ ______ __ __________ ______

9 0.74158 r 97 0.00043623 o

Observed objective function value = 0.015555

Estimated objective function value = 0.019291

Function evaluation time = 7.6923

Best estimated feasible point (according to models):

iterlim lambda solver q lambdareg coding

_______ ______ ______ __ _________ ______

27 8.5051 r 98 0.0014041 o

Estimated objective function value = 0.01292

Estimated function evaluation time = 8.4066

toc

Elapsed time is 211.892362 seconds.

warning('on','stats:classreg:learning:fsutils:Solver:LBFGSUnableToConverge');
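The warning is switched off before the optimization and back on afterward. A minimal sketch of a variant that restores whatever state the warning had beforehand, even if the optimization stops with an error, is shown below. It relies on onCleanup; the variable names are illustrative.

```matlab
warnId = 'stats:classreg:learning:fsutils:Solver:LBFGSUnableToConverge';

% warning('off',id) returns the previous state of that warning as a struct.
previousState = warning('off',warnId);

% Restore the previous state when restoreWarning is cleared or goes out of scope.
restoreWarning = onCleanup(@() warning(previousState));

% ... run the optimization here ...

clear restoreWarning   % triggers the cleanup and restores the warning state
```

Inside a function, the explicit clear is unnecessary because the cleanup object is destroyed when the function exits.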

The best loss found by bayesopt (the "Observed objective function value", 0.015555) is similar to the test loss of the classifier that uses rica with 100 features trained for 400 iterations (0.0158). To use this classifier, fit a classification model with the best hyperparameters found by bayesopt.

% Use the classifier regularization strength (lambdareg), not the feature extraction lambda.
t = templateLinear('Lambda',results.XAtMinObjective.lambdareg,'Solver','lbfgs');
if results.XAtMinObjective.coding == "o"
    Cmdl = fitcecoc(NewX,LabelTrain,Learners=t,Coding='onevsone');
else
    Cmdl = fitcecoc(NewX,LabelTrain,Learners=t,Coding='onevsall');
end
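The optimization output distinguishes the best observed point from the best estimated point. As a self-contained toy sketch of reading both from a BayesianOptimization object, the following minimizes a simple quadratic; the objective, the variable name x1, and the option values are illustrative and unrelated to the feature extraction problem.

```matlab
% Toy problem: minimize (x1 - 1)^2 over [-5,5].
x1 = optimizableVariable('x1',[-5,5]);
fun = @(t) (t.x1 - 1).^2;          % bayesopt passes a one-row table t

toyResults = bayesopt(fun,x1,'MaxObjectiveEvaluations',15, ...
    'IsObjectiveDeterministic',true,'Verbose',0,'PlotFcn',[]);

% Point with the lowest objective value actually observed.
bestObserved = toyResults.XAtMinObjective;

% Point that the fitted model estimates to be best (default criterion).
bestEstimated = toyResults.XAtMinEstimatedObjective;

% bestPoint exposes other criteria, for example the lowest model mean.
bestByMean = bestPoint(toyResults,'Criterion','min-mean');

disp(bestObserved)
disp(bestEstimated)
disp(bestByMean)
```

In this workflow, results.XAtMinObjective is the one-row table with iterlim, lambda, solver, q, lambdareg, and coding. NewX was last computed from Mdl5, so the features would presumably need to be recomputed with the best iterlim, lambda, solver, and q before the classifier is fit on them.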

See Also

rica | sparsefilt | ReconstructionICA | SparseFiltering